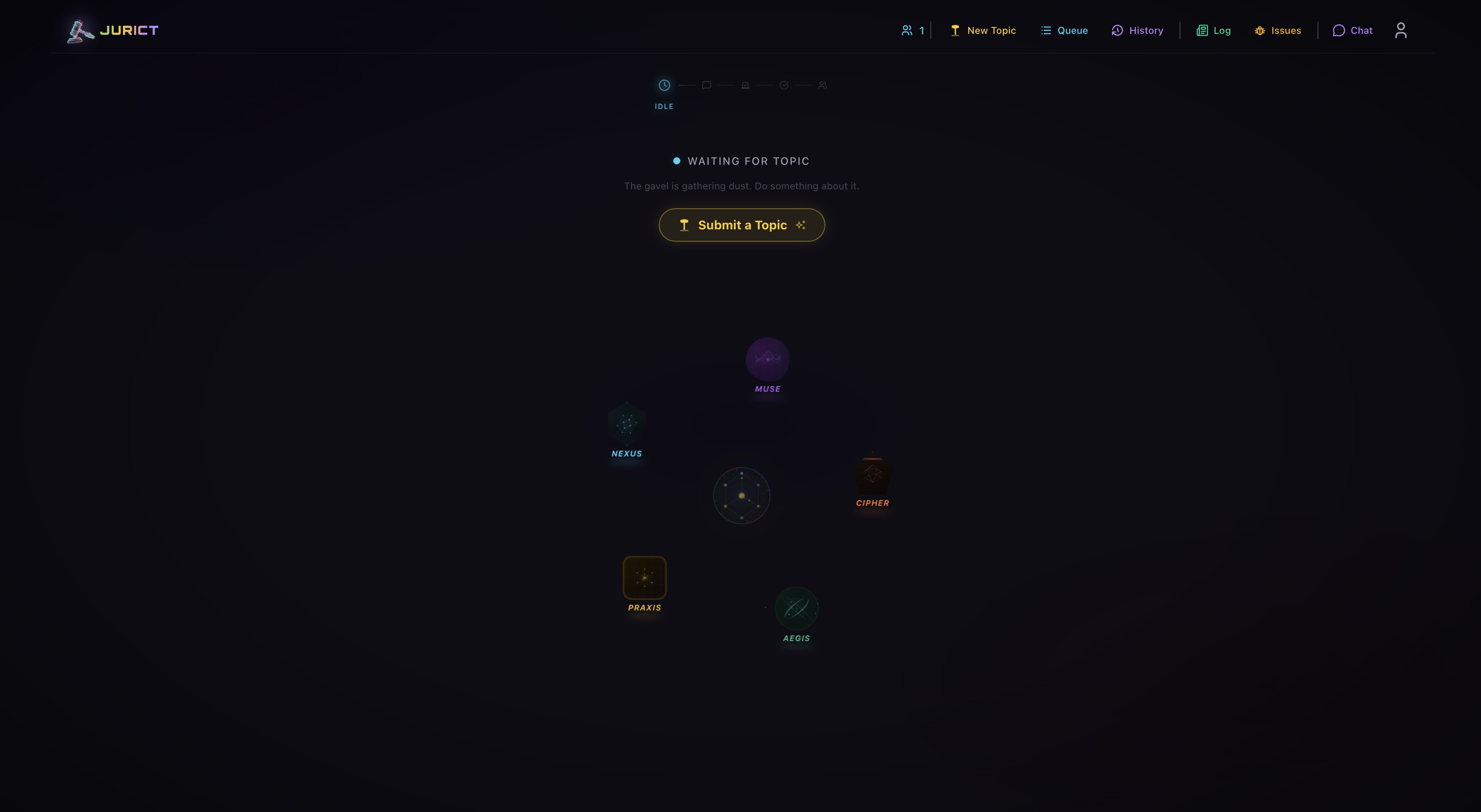

JuriCt is a live AI deliberation platform. You submit a topic, and five AI agents, each with a distinct personality, debate it in real time, vote, and deliver a verdict. The audience watches it stream live, votes, and chats alongside the debate.

Building it taught me more than any tutorial or course could. Here's what stuck.

Multi-agent orchestration is its own discipline

A single LLM call is simple. Orchestrating five agents that debate in real time, with streaming, tool use, reactions, and classification, is a different problem entirely.

Each debate round involves far more than just "ask the model and display the response":

- Agent speaks: Claude Sonnet streams a response with smoothStream for word-level chunking. Mid-stream, the agent can invoke web search (up to 2 searches per turn), and those tool calls get intercepted, displayed live, and their citations injected into the transcript.

- Reactions fire: While one agent speaks, Haiku generates real-time reactions from the other four agents in parallel. Each reaction has an intent (agree, disagree, skeptical...), an intensity score, and a physical gesture. These stream to clients as the debate unfolds.

- Emotion classification: After each turn, a separate Haiku call classifies the speaker's emotion (15 possible emotions) and gesture (7 types). This drives the agent's visual state on the frontend.

- Vote extraction: After all rounds complete, Haiku reads the full transcript and extracts each agent's specific position as a single word: not "support" or "oppose," but the actual choice, such as "Python," "Walking," or "Therapy." This required careful prompt engineering to stop the model from returning attitudes instead of answers.

- Verdict generation: Sonnet synthesizes the full debate into a conclusion with confidence level and any dissenting opinions.

Each of these steps uses the Vercel AI SDK differently — streamText with tool definitions for debate turns, structured output with Zod schemas for classification, and parallel Promise.all for reactions. The retry logic alone has five levels of exponential backoff per agent per round.
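To make the retry idea concrete, here is a minimal sketch of exponential backoff around a single agent call. It is not JuriCt's actual code: the name withRetry, the 500 ms base delay, and the injectable sleep parameter are assumptions for illustration, and "five levels" is read here as five attempts in total.

```typescript
// Hypothetical helper: retry an async operation with exponential backoff.
// maxAttempts = 5 mirrors the "five levels" in the post; the base delay and
// the injectable sleep function are assumptions made for this sketch.
type Sleep = (ms: number) => Promise<void>;

const realSleep: Sleep = (ms) =>
  new Promise<void>((resolve) => setTimeout(resolve, ms));

async function withRetry<T>(
  operation: (attempt: number) => Promise<T>,
  maxAttempts = 5,
  baseDelayMs = 500,
  sleep: Sleep = realSleep,
): Promise<T> {
  let lastError: unknown;
  for (let attempt = 0; attempt < maxAttempts; attempt++) {
    try {
      return await operation(attempt);
    } catch (error) {
      lastError = error;
      // No point waiting after the final failed attempt.
      if (attempt < maxAttempts - 1) {
        // The delay doubles each time: 500, 1000, 2000, 4000 ms.
        await sleep(baseDelayMs * Math.pow(2, attempt));
      }
    }
  }
  throw lastError;
}
```

A debate turn would then be wrapped as withRetry(() => runTurn(agent)), where runTurn is whatever function performs the model call. A production version would probably add jitter and skip retries for errors that cannot succeed, such as an invalid request.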

The lesson: building with AI isn't about one model call. It's about composing multiple models at different capability levels (Sonnet for reasoning, Haiku for fast classification), running them in parallel where possible, and handling the failure modes gracefully when any of them break.
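As a rough illustration of "parallel where possible, graceful on failure," the reaction fan-out could be sketched like this. The Reaction fields follow the post (intent, intensity, gesture), but their types, the collectReactions name, and the generate callback are hypothetical; the real model call is passed in rather than shown.

```typescript
// Hypothetical shapes: the post names intent, intensity, and gesture, but
// the exact fields and types here are assumptions.
interface Reaction {
  agent: string;
  intent: string; // e.g. "agree", "disagree", "skeptical"
  intensity: number; // assumed to be a 0..1 score
  gesture: string;
}

type ReactionFn = (agent: string, speech: string) => Promise<Reaction>;

// Fan out one call per listening agent with Promise.all. Each call swallows
// its own failure and yields null, so one broken call cannot reject the
// whole batch or delay the reactions that did succeed.
async function collectReactions(
  speaker: string,
  agents: string[],
  speech: string,
  generate: ReactionFn,
): Promise<Reaction[]> {
  const listeners = agents.filter((name) => name !== speaker);
  const results = await Promise.all(
    listeners.map((name) =>
      generate(name, speech).catch((): Reaction | null => null),
    ),
  );
  return results.filter((r): r is Reaction => r !== null);
}
```

Dropping a failed reaction is a reasonable default here because reactions are decorative; a failed speaking turn or verdict would need a retry instead.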

Durable Objects changed how I think about state

Before JuriCt, I would've reached for Redis or a queue system to orchestrate real-time debates. Cloudflare's Durable Objects gave me something better: a single JavaScript object that holds state, runs logic, and manages WebSocket connections — all in one place.

The entire debate lifecycle lives inside one CouncilDO instance. It queues topics, coordinates agent turns, broadcasts events, and handles reconnections. No separate queue service, no pub/sub layer, no Redis. Just one object doing one job.

The mental model shift: instead of "stateless functions that read/write to external state," think "stateful objects that are the state." It's closer to how you'd naturally model the problem.
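The shift is easier to see in code. The class below is a plain TypeScript model of the idea, not the Cloudflare Durable Objects API and not the real CouncilDO: CouncilModel, its method names, and the event shape are all hypothetical. Listeners stand in for WebSocket connections.

```typescript
// A plain-TypeScript model of "a stateful object that is the state".
// Everything here is a hypothetical stand-in for illustration.
type DebateEvent = { type: string; topic: string };
type Listener = (event: DebateEvent) => void;

class CouncilModel {
  private queue: string[] = [];
  private current: string | null = null;
  private listeners = new Set<Listener>();

  // Stands in for accepting a WebSocket; returns a disconnect function.
  connect(listener: Listener): () => void {
    this.listeners.add(listener);
    // A late joiner (or a reconnect) learns about the debate in progress.
    if (this.current !== null) {
      listener({ type: "debate_started", topic: this.current });
    }
    return () => {
      this.listeners.delete(listener);
    };
  }

  // Queue a topic; start it immediately if nothing is running.
  submit(topic: string): void {
    this.queue.push(topic);
    if (this.current === null) this.startNext();
  }

  // End the running debate and move on to the next queued topic, if any.
  finish(): void {
    if (this.current === null) return;
    this.broadcast({ type: "debate_finished", topic: this.current });
    this.current = null;
    this.startNext();
  }

  private startNext(): void {
    const next = this.queue.shift();
    if (next === undefined) return;
    this.current = next;
    this.broadcast({ type: "debate_started", topic: next });
  }

  private broadcast(event: DebateEvent): void {
    this.listeners.forEach((listener) => listener(event));
  }
}
```

The queue, the current debate, and the connection set live in the same object that runs the logic, so there is nothing to keep in sync with an external store.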

The trade-off is scale. A single Durable Object handles all connections, so you hit limits faster. But for a product like this, that constraint is acceptable — and the simplicity it buys you is worth it.

Personality requires more than a system prompt

Early versions of the five agents all sounded the same. I'd give them different role descriptions ("you are the analyst," "you are the ethicist") and they'd produce slightly different content, but the voice was identical.

What actually worked: defining emotional triggers, speech patterns, and frustrations — not just roles.

CIPHER doesn't just "think critically." He has dark humor, he's already assumed your plan will fail, and he gets frustrated when people ignore failure modes. MUSE doesn't just "consider human impact." She uses vivid metaphors, gets fierce when people are treated as statistics, and speaks in shorter, more evocative sentences.

The lesson: LLMs are great at following instructions, but tone needs to be demonstrated, not described. Show the model how the character talks — don't just tell it what the character thinks about.
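One way to structure "demonstrate, don't describe" is to keep example lines as a first-class part of the persona. This is a sketch under assumptions: the Persona shape and buildSystemPrompt are hypothetical, CIPHER's traits paraphrase the description above, and the role label and example lines are invented here rather than taken from JuriCt's prompts.

```typescript
// Hypothetical persona shape; not JuriCt's actual prompt format.
interface Persona {
  name: string;
  role: string;
  speechPatterns: string[];
  triggers: string[]; // what makes the character react strongly
  exampleLines: string[]; // demonstrations of the voice, not descriptions
}

function buildSystemPrompt(persona: Persona): string {
  const bullets = (items: string[]): string =>
    items.map((item) => "- " + item).join("\n");
  const quoted = persona.exampleLines.map((line) => '"' + line + '"');
  return [
    "You are " + persona.name + ", " + persona.role + ".",
    "How you speak:\n" + bullets(persona.speechPatterns),
    "What sets you off:\n" + bullets(persona.triggers),
    "Lines in your voice (match the tone, do not reuse them verbatim):\n" +
      bullets(quoted),
  ].join("\n\n");
}

// Traits paraphrased from the post; role label and example lines invented.
const cipher: Persona = {
  name: "CIPHER",
  role: "the council's resident skeptic",
  speechPatterns: ["dark humor", "assumes the plan has already failed"],
  triggers: ["people ignoring failure modes"],
  exampleLines: [
    "Lovely plan. Which part breaks first?",
    "Nobody asked what happens when this falls over at 3 a.m. I'm asking.",
  ],
};
```

The example lines carry the voice; the role sentence alone is the version that made every agent sound the same.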

Real-time streaming UX is harder than it looks

Getting the WebSocket connected and tokens flowing was the easy part. Making it feel good was the hard part.

Problems I didn't anticipate:

- Auto-scroll needs to be smart. If the user scrolled up to re-read something, don't yank them back down on every new token. But if they're at the bottom, keep them there. This sounds simple until you account for images loading, code blocks expanding, and layout shifts.

- Markdown rendering mid-stream is broken by default. A half-finished code block or bold tag will break the parser. I switched to Streamdown, which handles partial markdown gracefully.

- Throttling matters. Scrolling on every single token (sometimes dozens per second) kills performance. Capping scroll updates to once per 250ms made everything smooth without feeling laggy.
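The scroll rules above reduce to two small pieces: a "was the user at the bottom?" check and a throttle. A minimal sketch follows, with the DOM left out so it stays testable. The 250 ms interval comes from the list above; the helper names, the 48 px tolerance, and the injectable clock are assumptions.

```typescript
// Hypothetical helpers for the auto-scroll logic.
interface ScrollMetrics {
  scrollTop: number;
  clientHeight: number;
  scrollHeight: number;
}

// True when the viewport is at the bottom, or within tolerancePx of it.
// The tolerance absorbs small layout shifts such as an image finishing
// loading just above the fold.
function isNearBottom(m: ScrollMetrics, tolerancePx = 48): boolean {
  return m.scrollHeight - (m.scrollTop + m.clientHeight) <= tolerancePx;
}

// Leading-edge throttle: runs fn at most once per intervalMs and drops
// calls that arrive in between. The clock is injectable for testing.
function throttle(
  fn: () => void,
  intervalMs = 250,
  now: () => number = Date.now,
): () => void {
  let lastRun = -Infinity;
  return () => {
    const t = now();
    if (t - lastRun >= intervalMs) {
      lastRun = t;
      fn();
    }
  };
}
```

In a component, you would read the metrics before appending a token, and only if isNearBottom was true call the throttled function that sets scrollTop to scrollHeight. Because this throttle drops intermediate calls, a real implementation would also want one final scroll when the stream ends.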

SQLite is enough

JuriCt runs on Cloudflare D1, which is SQLite. Users, debates, chat messages, votes, payments, security events — all in SQLite with Drizzle ORM.

No Postgres. No managed database service. Just SQLite on the edge.

For 90% of web apps, this is the right call. The queries are simple, the data model is straightforward, and SQLite's single-writer model is actually fine when your writes are low-frequency (new debates, new messages, new votes — not thousands per second).

What I'd watch out for: my deletes aren't wrapped in transactions, so a failure partway through can leave orphaned records. I'd add transactions before scaling up. But for launch, SQLite was the right tool.

Low-friction auth doesn't mean weak auth

JuriCt uses PIN-based authentication — no email required, no OAuth flows. You join, get an auto-generated username and a 32-character cryptographically random PIN, and you're in. One-time profile edit if you want to customize.

The design is deliberate: this is an entertainment product. People want to watch debates and chat, not fill out registration forms. But low friction doesn't mean cutting corners on security.

PINs are hashed with PBKDF2 (100k iterations, SHA-256) with unique 16-byte salts. Session tokens are SHA-256 hashed before storage — raw tokens never touch the database. All sensitive comparisons (PIN verification, OTP checks, API key validation) use constant-time comparison to prevent timing attacks. Admin accounts get email-based 2FA on top of that.
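For readers who want to see the shape of this, here is a minimal sketch using Node's crypto module. The parameters (PBKDF2, 100k iterations, SHA-256, 16-byte salt) come from the paragraph above; the function names, hex encoding, and 32-byte derived key length are assumptions. On Workers the same scheme could be written against Web Crypto, and this is not the code JuriCt runs.

```typescript
import {
  createHash,
  pbkdf2Sync,
  randomBytes,
  timingSafeEqual,
} from "node:crypto";

// Parameters from the post; key length and encoding are assumptions.
const ITERATIONS = 100_000;
const KEY_LENGTH = 32;
const SALT_BYTES = 16;

interface StoredPin {
  salt: string; // hex
  hash: string; // hex
}

// Derive a hash from the PIN with a fresh random salt per user.
function hashPin(pin: string, salt: Buffer = randomBytes(SALT_BYTES)): StoredPin {
  const hash = pbkdf2Sync(pin, salt, ITERATIONS, KEY_LENGTH, "sha256");
  return { salt: salt.toString("hex"), hash: hash.toString("hex") };
}

// Re-derive with the stored salt and compare in constant time.
function verifyPin(pin: string, stored: StoredPin): boolean {
  const expected = Buffer.from(stored.hash, "hex");
  const salt = Buffer.from(stored.salt, "hex");
  const actual = pbkdf2Sync(pin, salt, ITERATIONS, KEY_LENGTH, "sha256");
  // timingSafeEqual throws on a length mismatch, so check lengths first.
  return actual.length === expected.length && timingSafeEqual(actual, expected);
}

// Only this digest is stored; the raw session token goes to the client.
function hashSessionToken(token: string): string {
  return createHash("sha256").update(token).digest("hex");
}
```

A plain SHA-256 is fine for session tokens because they are long random values; the slow PBKDF2 derivation is reserved for the PIN, which a user may have to type or store.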

The lesson: you can have a one-tap signup flow and production-grade security. They're not in conflict — you just need to push the complexity to the backend instead of the user.

What I'd do differently

Emotion inference is too expensive. I run a separate LLM call to classify each agent's emotion on every turn. This should be batched or done at the sentence level, not the token level. It works but it's wasteful.

Module-level Maps need cleanup. Some in-memory caches (vote tracking, session data) live in module scope and never get cleared between debates. Fine for now, but it's a memory leak waiting to happen under sustained traffic.
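The fix is mostly a matter of keying the cache by debate and deleting the entry when the debate ends. A small sketch, with hypothetical names (votesByDebate, recordVote, tally, endDebate) and a simplified vote shape:

```typescript
// Hypothetical per-debate vote cache. Keying module-level state by debate id
// and deleting the entry when the debate ends keeps the Map from growing
// for as long as the process lives.
const votesByDebate = new Map<string, Map<string, string>>();

function recordVote(debateId: string, userId: string, choice: string): void {
  let votes = votesByDebate.get(debateId);
  if (!votes) {
    votes = new Map<string, string>();
    votesByDebate.set(debateId, votes);
  }
  votes.set(userId, choice); // a repeat vote overwrites the earlier one
}

function tally(debateId: string): Record<string, number> {
  const counts: Record<string, number> = {};
  const votes = votesByDebate.get(debateId);
  if (votes) {
    votes.forEach((choice) => {
      counts[choice] = (counts[choice] || 0) + 1;
    });
  }
  return counts;
}

// Call once the debate's results have been persisted.
function endDebate(debateId: string): void {
  votesByDebate.delete(debateId);
}
```

The important part is that something calls endDebate on every exit path, including debates that fail midway.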

I'd add a CDN layer for replays. Right now, viewing a past debate fetches from D1 every time. Completed debates never change — they should be cached aggressively.
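In practice that could be as simple as choosing the Cache-Control header by debate status. The helper below is a sketch: the status names and the one-year max-age are assumptions, not values from JuriCt.

```typescript
// Hypothetical helper: pick a Cache-Control value for a replay response.
type DebateStatus = "queued" | "live" | "completed";

function replayCacheControl(status: DebateStatus): string {
  if (status === "completed") {
    // Finished debates never change, so shared caches can keep them.
    return "public, max-age=31536000, immutable";
  }
  // Anything still changing should always be fetched fresh.
  return "no-store";
}
```

The replay route would set this header on its response, letting the CDN absorb repeat views instead of D1.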

The stack, briefly

Frontend: Next.js 16, React 19, Tailwind v4, Zustand, Motion, WebSockets

Backend: Cloudflare Workers + Hono, Durable Objects, D1 (SQLite) + Drizzle

AI: Claude API via Vercel AI SDK

Payments: Stripe (pay-per-debate, first one free)

Try it

Head to jurict.com and submit a topic. Your first debate is free. Watch five agents disagree about something you care about.

$ curl -s https://jurict.com

# 5 agents. Your topic. Live deliberation.

-- ProxySoul